Washington DC 13 APR 2023

This is Part 3 of a three-part introduction to the unique challenges of military innovation. Click here for Part 1 and Part 2, or view the entire presentation in one file at the Overview.

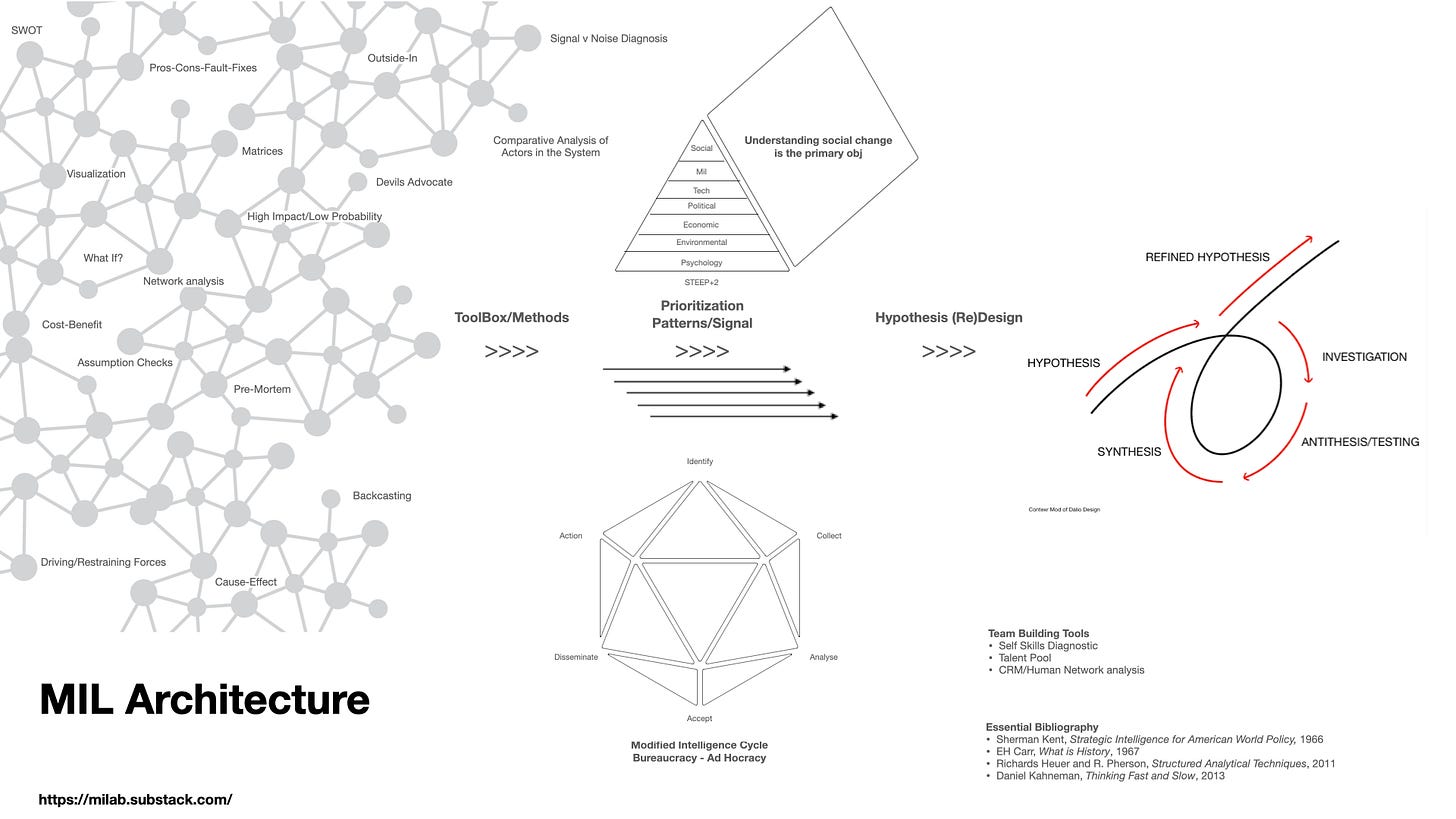

Innovation = Possibilism + Adhocracy + Methodology

In explaining why technology ≠ innovation, we proposed an alternative equation that better captures the complexity of military innovation that is so different from innovation in other professions. Innovation = Possibilism + Adhocracy + Methodology.

It really should come as no surprise that innovation in the world’s most complex system of systems is itself complex and multifaceted. In this article we will explain the equation and how its parts fit together to position thought leaders to move their organizations forward and thereby avoid strategic surprise.

Military innovation is dependent on the following cultural, organizational and ideational foundations:

- Possibilism
  - Open Mode thinking
  - Closed Mode execution
- Adhocracy
  - Organizational flexibility
  - Bureaucracy to adhocracy
- Methodology
  - War is a social activity involving wicked problems in a VUCA world
  - Ideational agility
  - ‘Why’ / ‘How’ alignment
  - Technology

"The pessimist complains about the wind. The optimist expects it to change. The leader [possibilist] adjusts the sails.” -John Maxwell

Possibilism

Pessimism and optimism are emotional states. Possibilism sees the world as it is, not as we wish it to be. It is concerned with unrestricted, curiosity-driven, reason-based problem-solving. Possibilism looks for ways to find advantage in chaos. It demands that challenges be examined for opportunities. Possibilists have “a worldview that is constructive and useful. [They] neither hope without reason, nor fear without reason.”[1] Possibilist organizations are designed to deliver optionality to decision making via a thorough analysis of the context around an opportunity. They are interested in disproving ‘no’ as an answer.

Any organization with a default position of ‘no’ is not a thinking organization. Default “it can’t be done” non-thinking is easy. It doesn’t rock the boat. Organizations that are intent on listing all the reasons something cannot be done typically never bother to thoroughly check all the underlying assumptions, circumstances, actors’ interests and motivations, and methods of analysis to find new opportunities. They leave themselves exposed to the worst thing that can happen: surprise.[2]

Possibilism seeks lines of operation in the tension between circumstances (realism) and action (ideation). EH Carr persuasively argued that realism without new ideas is barren and prohibits change. Of realism he said that it lacks “a finite goal, an emotional appeal, a right of moral judgement and a ground for action” and reminded the reader that without ideas “passive contemplation is all that remains to the individual”.

Possibilists use a methodology that critically reexamines everything we think we know to find new contexts (situations, associations or relationships) that can help create constructive solutions. In the world of grand strategy and military operations, things are constantly changing in ways that are not always obvious ‘to the naked eye’. Every actor in the international system comprises three parts: the people, the military, and the government (each subject to “passion, chance, and reason”). Each of these constituent parts is also subject to “fear, honor, [and] interests”. Within a state, what the people may fear, honor, or perceive as in their interest could be at significant variance from the government’s estimation of these factors, and the military’s view may differ again from that of both the people and the government.[3] Changes in these factors may be obvious. More often they are not. A sudden coup attempt in a stable democracy, for example, will reveal tensions previously unseen, downplayed, or dismissed by conventional wisdom that failed to apply possibilist rigor to its thinking.

Possibilists do not accept a static view of the international system. They constantly probe for alterations in these comparative balances of power within and between states and non-state actors.

Adhocracy

A term popularized by Alvin Toffler, adhocracy is the agility and flexibility of organizations to rapidly adapt to changing circumstances. While some functions will be necessary no matter the context, pop-up challenges, whether of short or long duration, require organizations to create ad hoc groups of experts. Adaptation does not mean entire organizations change all the time. But it also does not mean new challenges are shoehorned into old structures that are not fit for purpose. A poor fit may not always be obvious when something new comes along. So long as organizations can admit when the fit is not right and creatively build structures with the right people, funding, and support systems once needs are identified, they will have a much greater chance of meeting rapidly changing environments.

This may seem obvious, even a motherhood-level observation, but organizational rigidity is as common as it is counterproductive and potentially dangerous. If something new occurs in the environment and it does not accord with extant structures and their ways of perceiving the world, surprise is usually not far behind. Ossification is a self-reinforcing, invisible malady.

“New” does not necessarily mean glaringly obvious change. It can be something as deceptively simple as changed combinations of old thoughts and/or actions that go unnoticed because they are subtle shifts, not revolutions. It may take time to recognize that something no longer fits and requires adjustment. Ossified organizations will not be open to change, or will not be in “receive mode” to even understand that a change has occurred. Therefore there is advantage and opportunity for those that can reorganize either to respond to change or, better yet, to create it. Adhocracy is a vital antidote to ‘we’ve always done it that way’. Who dares, wins.[4]

Methodology

War is a social activity involving Wicked problems in a VUCA world (volatility, uncertainty, complexity and ambiguity). A mastery of the humanities and comfort with insoluble ambiguity are necessary and essential for strategic and military innovation.

Mathematics, engineering, and “social sciences” are unable to resolve the big issues of war and warfare.[5] They provide valuable data to assist in thinking about wicked problems, but they collapse in the face of ambiguity and the unknown (whether known unknowns or unknown unknowns).[6] A failure to understand and analyse people and societies will lead to certain failure in war.

Horst Rittel and Melvin Webber wrote an article in 1973 that was to become a classic. They explained how some problems, particularly those involving human interaction, are so wickedly complex that they defy solution. Tame problems live in the world of the hard sciences, where issues are quantifiable: they can be measured, compared, contrasted, analyzed and solved. Tame problems are not necessarily simple. They can be incredibly complex, like how to fly a spaceship to Mars and back.

Wicked problems cannot be quantified with an accuracy that permits them to be solved like an equation. How do we measure the willpower of the Taiwanese people to resist a Chinese invasion in advance of the event? The power of an individual mind, let alone millions of minds pulling in all directions, cannot be completely known with mathematical accuracy. Not even the most powerful psychometrics can deliver insight into mass psychology at the level needed to make a decision to go to war with total confidence in its outcome.[7] Not even AI can solve this challenge. This is why understanding the unique character of wicked problems is important in strategy and its innovation.

Tame problems can be solved by following established rules. Wicked problems cannot.

Chess has a finite set of rules, accounting for all situations that can occur. In mathematics, the tool chest of operations is also explicit. There are conventionalized criteria for objectively deciding whether the offered solution to an equation is correct or false. They can be independently checked by other qualified persons who are familiar with the established criteria; and the answer will be normally unambiguous. In solving a chess problem or a mathematical equation, the problem-solver knows when he has done his job. There are criteria that tell when the, or a, solution has been found.

Not so with wicked problems.

As distinguished from problems in the natural sciences, which are definable and separable and may have solutions that are findable, wicked problems (those dealing with big, complex social systems) are not ‘solvable’ in the way that problems in maths or chess have set, identifiable solutions.

Wicked problems are ill-defined; there are no true or false answers. There are no criteria which enable one to prove that all solutions to a wicked problem have been identified and considered. There are no classes of wicked problems in the sense that principles of solution can be developed to fit all members of a class. Social problems are never solved. They rely upon elusive political judgment for resolution. At best they are only re-solved, over and over again.

In the sciences and in fields like mathematics, chess, puzzle-solving or mechanical engineering design, the problem-solver can try various runs without penalty. With wicked problems every implemented solution is consequential. Every wicked problem can be considered to be a symptom of another problem.[8]

Sherman Kent, the father of strategic intelligence analysis, intuitively understood wicked problems and the limitations of maths, engineering and technology in solving them. The US intelligence system cost $90bn in 2022. It collects and listens to virtually every transmission that takes place on this planet. Yet it does not know China’s intentions for Taiwan or whether Putin will use a low-yield nuclear weapon. In fact, throughout its history it has frequently been surprised and has gotten most of the big calls wrong. In part, this has been because the challenges it seeks to address are not soluble with mathematical precision.

As far back as 1965 Kent observed:

“Whatever the complexities of the puzzles we strive to solve, and whatever the sophisticated techniques we may use to collect the pieces and store them, there can never be a time when the thoughtful man can be supplanted as the intelligence device supreme.”[9]

General Colin Powell famously set the following guidelines for his intelligence briefers:

Tell me what you know. Tell me what you don’t know. Then tell me what you think.

Even with the exquisite $90bn-a-year intelligence system at their disposal, and under conditions of information overabundance, the list of things a good analyst does not know will always be much longer than the list of things they do “know”, meaning know as a certainty. The old saw is true: the more you know, the more you realize how much you don’t know. Therein lie terrible dangers in the strategic dimension.

Powell set these boundaries so he could gain an insight into just how wicked a problem he was facing. Wicked problems require informed judgement. There can be no certainty. It is impossible to be certain that all the information is at hand, that it has been weighted correctly, and that the best lens of analysis has been applied.

A classic example of this is the Cuban Missile Crisis. This was the greatest strategic challenge in world history. It is terrifying how much we did not know about the crisis at the time and how close we came to total annihilation. Not because of what we knew, but because of what we didn’t know and how we analyzed the problem.

The Joint Chiefs of Staff were pushing Kennedy to order an attack on the island before the strategic missiles were armed and fueled. However, neither President Kennedy nor the Joint Chiefs knew that there were 80 “tactical” warheads mounted on cruise missiles on the island, separate from the strategic missiles at the heart of the crisis.

An American assault would have been met with up to 80 Hiroshima-sized bombs aimed at the US base at Guantanamo Bay and at approaching naval ships, including amphibs filled with thousands of Marines. That would have escalated into global MAD. The cruise missiles were the ultimate unknown unknowns.

So the first rule of military innovation methodology is “Beware of simple solutions”. In a world beset by volatility, uncertainty, complexity and ambiguity (VUCA), comfort with insoluble wicked problems is essential for strategic and military innovation. These challenges require an open mind, ideational agility, self-awareness, awareness of the interconnectedness of problems, an appreciation for the limits of the known and unknown possibilities involved, and a concomitant sensitivity to the potential for unintended consequences.

Future posts will explore methodology issues in more detail. It is the most critical element to get right in order to unlock genuine innovation. There is a lot of “faux” social science happening in Ops rooms around the military. Remember in Iraq the frequency of garbage collection was supposed to indicate whether the US was winning the war? The issue was not the garbage on the streets. It was the garbage that passed for “metrics” taken to indicate whether the US was “winning” or “losing”. The ignored metric at the heart of the strategic problem was the one no one was willing to measure because it was not politic to do so - the degree to which American forces were perceived as occupiers by the locals.

Ensuring alignment between the “why” and “how” of any challenge is not simple or easy. If innovation were easy we would be much further down the road than we are today. It certainly is possible, but it is much harder in the strategic and military context than in any other.

The Military Innovation Lab aims to explore these questions and provide techniques and examples of best practice so that we can deter, defend and defeat future threats to US interests against all enemies.

Update - 8 AUG 2023 - “Metrics Gone Wild: The Military’s Dangerous Obsession”

A great short piece by “Doctrine Man” on some of the themes discussed above.

[1] Hans Rosling coined the term possibilism. His thinking is similar to that of the great International Relations theorist EH Carr, who in The Twenty Years’ Crisis was critical of both realism and idealism. Carr argued for an essential interplay between realism and idealism, where the former ensures analysis starts with the world as it is, and the latter provides a basis for imagining alternative worlds and a creative platform for reform. As reform hypotheses are developed they are then subject to evaluation and refutation by interrogation grounded in realistic knowledge of the world. Thus a never-ending cycle of ‘what might be’ is refined by the limits of ‘what is’ and vice versa.

[2] MIL just listed some of the techniques of Red Teams, another future topic of the Lab.

[3] Often, a government’s key fear is of its own people. Militaries too can feel threatened by the people or the government. The point is that to assume synchronized alignment between the people, government and military would be a huge mistake, and that careful analysis of the gaps is required on a continuing basis as these relationships shift. Equally, each is conditioned by chance, passion and reason. These concepts come from Thucydides and Clausewitz.

[4] The motto of the SAS.

[5] There is no such thing as social sciences. The term is a misnomer. Humanity is not subject to immutable laws. It turns out that neither is advanced physics! That philosophical discussion is for another day but it is important to flag here as it will keep coming up.

[6] It is a real thing. Another example of why the military world is not like any other.

[7] Social control is a hot topic in the commercial and political world. Psychometrics “is a scientific discipline concerned with the question of how psychological constructs (e.g., intelligence, neuroticism, or depression) can be optimally related to observables (e.g., outcomes of psychological tests, genetic profiles, neuroscientific information)”. Psychometric techniques have been used with exquisite effect by social media platforms to manipulate whole populations. Their rules and processes are subjective and imperfect. Algorithms are “If this, then that” (IFTTT) rules that are only as effective as the mind of their creator. Society cannot be controlled with perfect precision. It can’t even be measured or assessed beyond certain limits of psychology to ‘know’ its subject.

[8] Horst Rittel, Melvin Webber, Dilemmas in a General Theory of Planning, Policy Sciences, 4 (1973) pp.155-169. I have used the original text only but reformatted it for style and brevity.

[9] Sherman Kent, Strategic Intelligence for American World Policy, preface to the 1965 edition, p.xviii.